Analysts criticized an earlier draft of the regulations released in April as deeply unfriendly to the industry. Some requirements, they said, like that companies should verify the accuracy of the data their AI models learned on — which in many cases includes huge chunks of text from the internet like Reddit and Wikipedia, both banned in China — would be nearly impossible to comply with.

I find it insane how ensuring the input’s accuracy is something they balk at.

In chatting with Ernie, Chinese search giant Baidu’s prototype AI chatbot, The Washington Post found that even simple requests for facts about China’s government or top leader Xi prompted it to terminate the exchange with a canned reply.

Analysts criticized an earlier draft of the regulations released in April as deeply unfriendly to the industry. Some requirements, they said, like that companies should verify the accuracy of the data their AI models learned on — which in many cases includes huge chunks of text from the internet like Reddit and Wikipedia, both banned in China — would be nearly impossible to comply with.

I find it insane how ensuring the input’s accuracy is something they balk at.

Exactly.

It’s like uh they could just use verified sources to begin with instead of user edited stuff mainly staffed by the CIA and corporate influence peddlers? Like maybe China has a state encyclopedia, volumes of academic works that have been peer reviewed.

It’s maddening that this is seen as a burden. Go back to conceptions of intelligent machines in like the 60s through 80s and many of the futurists there had them being carefully taught by ingesting written works separated into fiction and non-fiction, being taught by teachers, etc. These lazy western companies like chatgpt just want to skip all the hard work of actually making machines that have and can give correct answers, they want to skip to the finish line to collect the money and paper over and correct after the fact to the extent they can models built on falsity only as problems appear. China’s approach to make sure the models are built correctly off of only good data to start with will likely be better to manage down the road.

The appeal, aka hype, of all the current AI is that they are able to reap the fruit of free labor by scraping user-generated data. Having to curate the data means they will have to spend resources on real employees, which will cut into their profit, so of course the capitalists are gonna cry foul.

Earnest Ernie

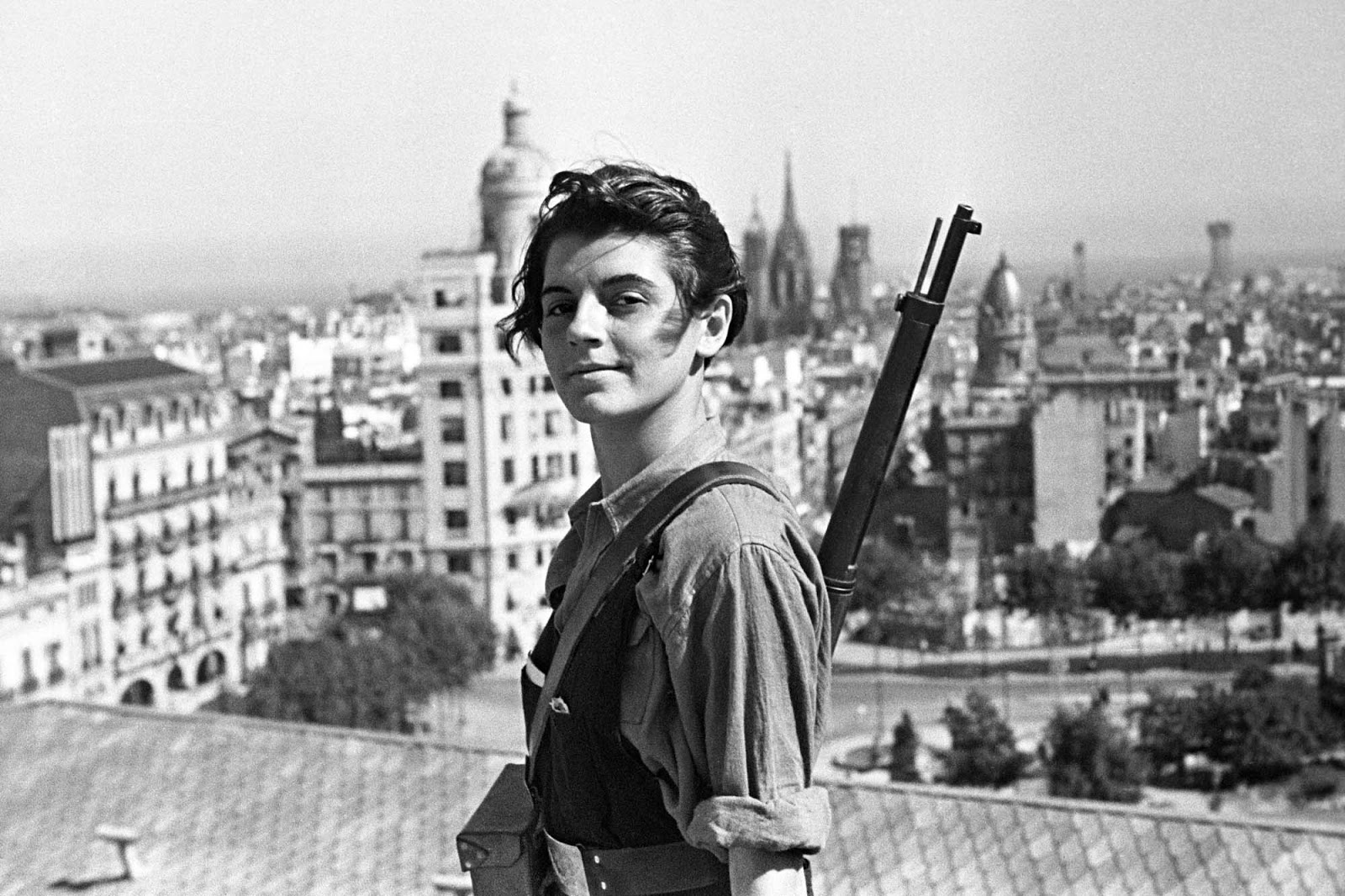

Chinese cyberwave 🤝 Juche Necromancy

That sounds like the best premise for a TTRPG I have ever heard.

Would use all my xi bucks for it

What does that even mean, though? It seems they’re basically just committing to safe and reasonable technological development. Yeah, that’s impossible under capitalism because stock line must go up, but it seems like they’re conflating reviving Karl Marx as an AI with just not letting their chatbots spout nonsense.

To be fair, I have no idea what the AI world looks like in China, but elsewhere AI can mean anything from a linear regression to complex logical inference systems. With how shallow the newscorp coverage of their developments is, I’m never even sure if they’re talking about LLMs or just automated logistics tools.

“This is a pretty significant set of responsibilities, and will make it hard for smaller companies without an existing compliance and censorship apparatus to offer services,” said Toner.

As if smaller companies ever had a chance of competing in this area in the first place, with the sheer amount of data and infrastructure required to put something like that online. Even the biggest companies need to do shit like this.

One result could be that in ten years China’s internet will still be… the internet, with all the strengths and shortcomings that entails. Meanwhile the internet in western countries will have devolved into an increasingly unusable and useless mess of AI-generated content. Like Quora, but everywhere and worse.

Can’t wait for AI generated MCU movies rehashing Avengers 2. But yeah, China seems to be on the right track on this one. I just think that a lot of their international reception over AI is very muddled by the stock exchange AI hype train, when we really just need some cool image processing techniques and some great optimization discovery like Soviet Linear Programming. Fancy chatbots and “content generators” should be the last priority, though at least nobody is being made homeless to fuel their ones.

Man, that article on Kantorovich is fascinating – imagine the possibilities. I wonder if this will be remembered as the century in which computing in socialist countries really pulls ahead. (Imagine future historians saying of the American and western European economies that “they failed because they were unable to adequately adapt to the computer revolution”).

I wonder what Yugoslavia would’ve been up to these days. Iskra Delta had some pretty sophisticated designs going on with the Partner and Triglav from what I can tell.

It’d be kinda dope if the AI uprising was also an indefatigable global Maoist one

Probably not.

Right now, a major requirement for AI seems to be a large body of training data. Scraping Reddit is a great way to do that, curating a body of verifiable information isn’t.

The AI overlord is going to have the knowledge and temperament of an average Redditor.

That’s one of the most frightening things I’ve heard all week

Slight correction and further info on this:

Although it’s theoretically possible someone could train a language model on Reddit alone, I’m not aware of any companies or researchers who have. The closest equivalent may be Stable LM, a language model that was panned for producing incoherent output. Some mocked it for using Reddit as something like 50-60% of its dataset, tho it was also made clear that their training process was a mess in general.

How a language model talks and what it can talk about is an issue with some awareness already, though the actions taken so far, at least in the context of the US, are about what you would expect. OpenAI, one of the only companies with enough money to train its own models from scratch and one of the most influential, bringing language models into public view with ChatGPT, took a pretty clearly “decorum liberal” stance on it, tuning their model’s output over time to make it as difficult as possible for it to say anything that might look bad in a news article, with the end result being a model that sounds like it’s wearing formal clothing at a dinner party and is about to lecture you. And also unsurprisingly, part of this process was capitalism striking again, with OpenAI traumatizing underpaid Kenyan workers through a 3rd party company to help filter out unwanted output from the language model: https://www.vice.com/en/article/wxn3kw/openai-used-kenyan-workers-making-dollar2-an-hour-to-filter-traumatic-content-from-chatgpt

Though I’m not familiar enough with the details at other companies, most other language models produced from scratch have followed in OpenAI’s footsteps, in terms of choosing “liberal decorum” style tuning efforts and calling it “safety and ethics.”

I also know only a limited amount about alignment (efforts to understand what exactly a language model is learning, why it’s learning it, and what that positions it as in relation to human goals and intentions). But from the little I’ve seen of it, the most basic level of “trying to make sure output does not steer toward downright creepy things” has to do with careful curation of the dataset and lots of testing at checkpoints along the way. A dataset like this could include Reddit, but it would likely be a limited part of it, and as far as I can tell, what matters more than where you get the data is how the different elements in the dataset balance out; so you include stuff that is maybe repulsive and you include stuff that is idyllic and anywhere in-between, and you try to balance it in such a way that it’s not going to trend toward repulsive stuff, but it’s still capable of understanding the repulsive stuff, so it can learn from it (kind of like a human).

None of this tackles a deeper question of cultural bias in a dataset, which is its own can of worms, but I’m not sure how much can be done about that while the method of training for a specific language means including a ton of data that is rife with that language’s cultural biases. It may be a bit cyclical in this way, in practice, but to what extent is difficult to say because of how extensive a dataset may be and factoring in how the people who create it choose to balance things out.

Edit: mixed up “unpaid” and “underpaid” initially; a notable difference, tho still bad either way

Unless you nurture and care for the AI and treat it like you would a person.

Yeah, American AI makers aren’t going to do that.

I’m very aware. Hopefully China will. But still, that kind of A.I. is still years, if not decades away.

Paywall bypass: https://archive.is/7axVU

I despise paywalls, especially on news articles.

Don’t they say that about everything?

pretty much, still a feel good story though :)

Has Russia done anything AI related? If I can get a US-made AI, a Chinese AI and a Russian AI to torment me for the rest of eternity we’re most of the way to AM.
