To be fair, the protein folding thing is legitimately impressive and an actual good use for the technology that isn’t just harvesting people’s creativity for profit.

The way to tell so often seems to be if someone has called it AI or Machine Learning.

AI? “I put this through ChatGPT” (or “The media department has us by the balls”)

ML? “I crunched a huge amount of data in a huge amount of ways, and found something interesting”

Actually I endorse the fact that we are less shy of calling “AI” algorithms that do exhibit emergent intelligence and broad knowledge. AI used to be a legitimate name for the field that encompasses ML, and we have come to understand a lot of interesting things about intelligence thanks to LLMs nowadays, like the fact that training on next-word prediction is enough to create pretty complex world models, that transformer architectures are capable of abstraction, or that morality arises naturally when you try to acquire all the prerequisites to have a normal discussion with a human.

Yeah my class in college was just called Artificial Intelligence

omg what is even the point of scientific progress and the advancement of human knowledge unless one specific person gets all the glory. What is science even for if not the validation of some human’s individual ego.

I mean, what are most of us doing ever at all for all of history if not validating our individual egos in one way or another?

What is science even for if not the validation of some human’s individual ego.

Satisfaction of personal curiosity with government funding

the technology that isnt just harvesting people’s creativity for profit.

It’s the system harvesting people’s creativity for profit. Capitalism did it, capitalism does that, capitalism will always do that. In the best case. Otherwise it will harvest people entirely.

The whole “all AI bad” is disconnected and primitivism.

John J. Hopfield’s work is SCIENCE with caps. A decade of investigations during the 80s, when computational power couldn’t really do much with those models. And now it has been shown that those models work really well given proper computational power.

Also not all AI is generative AI that takes money out of fanfic drawers’ pockets or a useless hallucinating chatbot. Neural networks are commonly used in science as a very useful tool for many tasks. Also image recognition is nowadays practically a solved issue thanks to their research. Protein folding. Dataset reduction. Fluent text to speech. Speech recognition… AI may be getting more traction nowadays because of the generative AIs (that also have their own merit, like it or not) but there is much more to it.

As with any technological advance, there are shitty use cases and good use cases. You cannot condemn a whole tech just for the shitty uses of some greedy capitalists. Well… you can condemn it. But then I will classify you as a primitivist.

Scientific theory that resulted in practical applications useful to people is why the Nobel prize was created to begin with. So it is a well-deserved prize. More so than many others.

Agreed. Which is why we should call it Machine Learning (or Data Science) and continue to torch OpenAI until it is no more.

‘AI hate’ is usually connected with insane claims like ‘we have “reasoning” model’.

That shit needs to die in fire.

I’m still waiting for the full-planet weather model. That will be something.

I’m still waiting for the full-planet weather model. That will be something.

That’s going to be a hard one, given that past weather patterns are increasingly not predictive of future weather patterns, because something keeps dumping CO2 into the atmosphere and raising the global temperature

Ooooopsieeees it was me 🙈

Generative AI is really causing a negative association with AI in general to the point where a proper rebranding is probably in order.

Generative AI is part of AI. And it has its own merits. Very big merits. Like it or not, it is a milestone in the field. It is mostly hated not because it doesn’t work but because it does.

If generative AI could not create images the way it does I assure you we wouldn’t have the legion of etsy and patreon painters complaining about it.

The Nobel prize is not for generative AI, of course; it’s for the fathers of the field and their complex neural networks that made most advances since then possible.

Let’s start by not calling it AI anymore. Cause it isn’t.

It has been called that since the 50s, when it could do literally nothing because computer power wasn’t enough. It is the field that leads to an artificially created intelligence. We never had any issues with the name. No need for a rebrand.

What we call AI today is also not going to evolve into an actual AI.

You can call the field of research what you want, but the current products are not AI. Do you also call potatoes vodka?

You sir or madam give me hope that there are still reasonable people on the internet. Well written.

Wait, are you an AI bot defending itself…?

Luckily genAI isn’t good enough to sound this real yet… I hope

Oh, sure, Mr. Enshittifist, you can call me that.

I don’t get the ai hate sentiment. In fact I want ai to be so good that it steals all our jobs. Every single “worker” on the planet. The only job I don’t think they can steal is that of middle management because I don’t think we have digitized data on how to suck your own dick. After everybody is jobless, then we would be free. We won’t need the rich. They can be made into a fine broth.

Sarcasm aside, I really believe we should automate all menial jobs, crunch more data and make this world a better place, not steal creative content made by humans and make second rate copies.

The problem with AI isn’t the tech itself. It’s what capitalism is doing with it. Alongside what you say, using AI to achieve fully automated luxury gay space communism would be wonderful.

Maybe the problem is, you know, capitalism?

As always!

Just noticed… Did I reply to the wrong comment? I wanted to use this as a reply to one with “AI is bad” and without “capitalism makes it bad”.

Derp.

I don’t know if you’ve been paying attention to everything that’s happened since the industrial revolution but that’s not how it’s going to work

The problem is that it will be the rich that are the owners of the AI that stole your job so suddenly we peasants are no longer needed. We won’t be free, we will be broth.

Then you have a choice.

Option 1. Halt scientific and technological progress and be robbed anyway because if capitalists do not get more money out of tech they are getting it out of making you work more hours for less money.

Option 2. End capitalism.

I vote option 2

Option 3. Get a job as part of the 5% of the population still employed in serving the various security apparatuses protecting the rich fucks – you could be a soldier, cop, or government official.

Better to be part of the boot than be the poor fuckers getting stepped on, right? You can sleep easily knowing you have it slightly better than the other 95% of the underclass.

Well you see 100 people won’t be able to make soup of trillions. But you know what we, a trillion people, can do? Run the guillotine 100 times

Well you see 100 people won’t be able to make soup of trillions.

You should check out the climate catastrophe sometime.

Had me in the first half, not gonna lie

I don’t get the ai hate sentiment.

I don’t get what’s not to get. AI is a heap of bullshit that’s piled on top of a decade of cryptobros.

it’s not even impressive enough to make a positive world impact in the 2-3 years it’s been publicly available.

shit is going to crash and burn like web3.

I’ve seen people put full on contracts that are behind NDAs through a public content trained AI.

I’ve seen developers use cuck-pilot for a year and “never” code again… until the PR is sent back over and over and over again and they have to rewrite it.

I’ve seen the AI news about new chemicals, new science, new [fill in the blank], and it’s all been PR bullshit.

so yeah, I don’t believe AI is our savior. can it make some convincing porn? sure. can it do my taxes? probably not.

You are ignoring ALL of the positive applications of AI from several decades of development, and only focusing on the negative aspects of generative AI.

Here is a non-exhaustive list of some applications:

- In healthcare as a tool for earlier detection and prevention of certain diseases

- For anomaly detection in intrusion detection systems, protecting web servers

- Disaster relief for identifying the affected areas and aiding in planning the rescue effort

- Fall detection in e.g. phones and smartwatches that can alert medical services, especially useful for the elderly.

- Various forecasting applications that can help plan e.g. production to reduce waste. Etc…

There have even been a lot of good applications of generative AI, e.g. in production, especially for construction, where a generative AI can produce a functionally equivalent product with less material while still maintaining the strength. This reduces the cost of manufacturing, and also the environmental impact due to the reduced material usage.

Does AI have its problems? Sure. Is generative AI being misused and abused? Definitely. But just because some applications are useless it doesn’t mean that the whole field is.

A hammer can be used to murder someone, that does not mean that all hammers are murder weapons.

Imaginary and unproven cases without any apparent “intelligence”.

Seriously… Fall detection? It’s like 3 lines of code… Disaster relief? Show me any actual evidence of “AI” being used…

These are not imaginary use cases. Are you generally critical of technology?

give me at least two peer reviewed articles showing that AI has had a measurably positive impact on society over the last 24 months.

shouldn’t be too hard for AI to come up with that, right?

if you can do that then I’ll admit that AI has potential to become more than a crypto scam.

He’s already given you 5 examples of positive impact. You’re just moving the goalposts now.

I’m happy to bash morons who abuse generative AIs in bad applications and I can acknowledge that LLM-fuelled misinformation is a problem, but don’t lump “all AI” together and then deny the very obvious positive impact other applications have had (e.g. in healthcare).

LLMs fucking suck. But there are things that don’t suck. AI chess engines have entirely changed the game, AI protein predictors have made designer drugs and nanobots come within our grasp.

It’s just that tech bros want to grab quick cash from us peasants and that somehow equates to integrating ChatGPT into everything. The most moronic of AI has become their poster child. It’s like if we asked people what a US president is like in character and everybody showed Trump to them as an example.

In what way is a chess engine meaningfully “intelligent”?

He’s already given you 5 ~~examples~~ anecdotes of positive impact.

Who’s upvoting this? Is Lemmy really this scientifically illiterate?

those aren’t examples they’re hearsay. “oh everybody knows this to be true”

You are ignoring ALL of the positive applications of AI from several decades of development, and only focusing on the negative aspects of generative AI.

generative AI is the only “AI”. everything that came before that was a thought experiment based on the human perception of a neural network. it’d be like calling a first draft a finished book.

if you consider the Turing Test AI then it blurs the line between a neural net and nested if/else logic.

Here is a non-exhaustive list of some applications:

- In healthcare as a tool for earlier detection and prevention of certain diseases

great, give an example of this being used to save lives from a peer reviewed source that won’t be biased by product development or hospital marketing.

- For anomaly detection in intrusion detection systems, protecting web servers

let’s be real here, this is still a golden turd and is more ML than AI. I know because it’s my job to know.

- Disaster relief for identifying the affected areas and aiding in planning the rescue effort

hearsay, give a credible source of when this was used to save lives. I doubt that AI could ever be used in this way because it’s basic disaster triage, which would open ANY company up to litigation should their algorithm kill someone.

- Fall detection in e.g. phones and smartwatches that can alert medical services, especially useful for the elderly.

this is dumb. AI isn’t even used in this and you know it. algorithms are not AI. falls are detected when a sudden gyroscopic speed/direction is identified based on a set number of variables. everyone falls the same when your phone is in your pocket. dropping your phone will show differently due to a change in mass and spin. again, algorithmic not AI.
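For readers wondering what a purely rule-based detector of the kind described in this comment might look like, here is a toy sketch. It is an illustration under loud assumptions, not how any real phone or watch works: it uses accelerometer magnitude as a stand-in for the gyroscope readings mentioned above, and the names and thresholds (`FREE_FALL_G`, `IMPACT_G`, `MAX_GAP`) are made up for the example.

```python
import math

# All three values are hypothetical, chosen only to make the example readable.
FREE_FALL_G = 0.4   # below this magnitude (in g) we treat the device as falling
IMPACT_G = 2.5      # above this magnitude (in g) we treat the reading as an impact
MAX_GAP = 10        # max number of samples allowed between free fall and impact


def detect_fall(samples):
    """Return True if a free-fall reading is followed closely by an impact.

    `samples` is a sequence of (ax, ay, az) accelerometer readings in g.
    """
    free_fall_at = None
    for i, (ax, ay, az) in enumerate(samples):
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        if magnitude < FREE_FALL_G:
            # Remember the most recent sample that looked like free fall.
            free_fall_at = i
        elif (
            magnitude > IMPACT_G
            and free_fall_at is not None
            and i - free_fall_at <= MAX_GAP
        ):
            return True
    return False


resting = [(0.0, 0.0, 1.0)] * 5
fall = resting + [(0.0, 0.0, 0.1)] * 3 + [(0.0, 0.0, 3.0)] + resting
print(detect_fall(fall), detect_fall(resting))
```

Fixed thresholds like these are easy to write but say nothing about how well they separate real falls from, say, sitting down hard; that question is the one the thread is arguing about.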

- Various forecasting applications that can help plan e.g. production to reduce waste. Etc…

forecasting is an algorithm not AI. ML would determine how accurate an algorithm is, as a percentage, based on what it knows. algorithms and ML are not AI.

There have even been a lot of good applications of generative AI, e.g. in production, especially for construction, where a generative AI can produce a functionally equivalent product with less material while still maintaining the strength. This reduces the cost of manufacturing, and also the environmental impact due to the reduced material usage.

this reads just like the marketing bullshit companies promote to show how “altruistic” they are.

Does AI have its problems? Sure. Is generative AI being misused and abused? Definitely. But just because some applications are useless it doesn’t mean that the whole field is.

I won’t deny there is potential there, but we’re a loooong way from meaningful impact.

A hammer can be used to murder someone, that does not mean that all hammers are murder weapons.

just because a hammer is a hammer doesn’t mean it can’t be used to commit murder. dumbest argument ever, right up there with “only way to stop a bad guy with a gun is a good guy with a gun.”

generative AI is the only “AI”. everything that came before that was a thought experiment based on the human perception of a neural network. it’d be like calling a first draft a finished book.

You clearly don’t know much about the field. Generative AI is the new thing that people are going crazy over, and yes it is pretty cool. But it’s built on research into other types of AI-- classifiers being a big one-- that still exist in their own distinct form and are not simply a draft of ChatGPT. In fact, I believe classification is one of the most immediately useful tasks that you can train an AI for. You were given several examples of this in an earlier comment.

Fundamentally, AI is a way to process fuzzy data. It’s an alternative to traditional algorithms, where you need a hard answer with a fairly high confidence but have no concrete rules for determining the answer. It analyzes patterns and predicts what the answer will be. For patterns that have fuzzy inputs but answers that are relatively unambiguous, this allows us to tackle an entire class of computational problems which were previously impossible. To summarize, and at risk of sounding buzzwordy, it lets computers think more like humans. And no, for the record, it has nothing to do with crypto.

Nobody here will give you peer-reviewed articles because it’s clear that your position is overconfident for your subject knowledge, so the likelihood a valid response will change your mind is very small, so it’s not worth the effort. That includes me, sorry. I can explain in more detail how non-generative AI works if you’d like to know more.

not once did I mention ChatGPT or LLMs. why do aibros always use them as an argument? I think it’s because you all know how shit they are and call it out so you can disarm anyone trying to use it as proof of how shit AI is.

everything you mentioned is ML and algorithm interpretation, not AI. fuzzy data is processed by ML. fuzzy inputs, ML. AI stores data similarly to a neural network, but that does not mean it “thinks like a human”.

if nobody can provide peer reviewed articles, that means they don’t exist, which means all the “power” behind AI is just hot air. if they existed, just pop it into your little LLM and have it spit the articles out.

AI is a marketing joke like “the cloud” was 20 years ago.

Classification =/= intelligence.

My spell checker can classify incorrectly spelled words. Is that intelligence? The whole field is a phony grift.

AI is a way to ~~process fuzzy data~~ sell fuzzy statistics.

Nobody here will give you peer-reviewed articles

Nuf said.

When I hear “AI”, I think of that thing that proofreads my emails and writes boilerplate code. Just a useful tool among a long list of others. Why would I spend emotional effort hating it? I think people who “hate” AI are just as annoying as the people pushing it as the solution to all our problems.

I don’t think anybody hates spell checkers if that’s what you consider “AI”.

It’s more about the grifters pushing this phony “intelligence” as the savior/destroyer of humanity.

when I get an email written by AI, it means the person who sent it doesn’t deem me worth their time to respond to me themselves.

I get a lot of email that I have to read for work. It used to be about 30 a day that I had to respond to. now that people are using AI, it’s at or over 100 a day.

I provide technical consulting and give accurate feedback based on my knowledge and experience on the product I have built over the last decade and a half.

if nobody is reading my email why does it matter if I’m accurate? if generative AI is training on my knowledge and experience where does that leave me in 5 years?

business is built on trust, AI circumvents that trust by replacing the nuances between partners that grow that trust.

Hi there GreenKnight23!

I don’t think it’s necessarily you personally they find a waste of time… it might be the layers of fluff that most business emails contain. They don’t know if you’re the sort of person who expects it.

Best Regards,

Explodicle
Internet Comment Writer
sh.itjust.works

it’s not even impressive enough to make a positive world impact in the 2-3 years it’s been publicly available.

It literally just won people two Nobel prizes

How does that help the rest of us?

It allows us to predict the structure of proteins before we make them. This can speed up research into protein-based medical treatments by astronomical amounts-- drugs which took years to develop through trial and error and/or thousands of hours of computational power can now be predicted beforehand in terms of their structure, which allows us to predict how they interact with the proteins in our body. It’s an incredible breakthrough in the speed of medical research.

with the compute power required for models like alphafold, my guess is it will be under the monopoly of some corporation which will charge exorbitant prices for any drugs it develops through AI. Not a fault of AI itself, just fucking parasitic shareholder pigs which we should have eaten long ago.

Damn I hope not but yeah probably :(

I am talking about them winning the award

That’s a bad faith question, but I’ll answer it anyways. It helps us because it means that we may now use the discoveries that won them the award.

It’s hype like this that breaks the back of the public when “AI doesn’t change anything”. Don’t get me wrong: AlphaFold has done incredible things. We can now create computational models of proteins in a few hours instead of a decade. But the difference between a computational model and the actual thing is like the difference between a piece of cheese and yellow plastic: they both melt nicely but you’d never want one of them in your quesadilla.

Hooray for them. /s

If I just hand wave all the good things and call them bullshit, AI is nothing more than bad things!

- Lemmy

Current “AIs” (LLMs) are only useful for art and non-fact based text. The two things people particularly do not need computers to do for them.

LLMs fucking suck. But that’s the worst kind of AI. It’s just an autocorrect on steroids. But you know what a good AI is? The one that, given an amino acid sequence, predicts its 3D structure. It’s mind boggling. We can design personal protein robots with that kind of knowledge.

I agree, but the reason for all the AI hate is companies pushing LLMs, calling them AIs, and treating them like some miracle that is worth all the energy they consume. If we try to differentiate between LLMs and AI then the AI hate will go away.

Exactly. LLMs are a mockery of this tech. They have become so powerful because of the amount of compute we have invested in them. Think how amazing it would be if AlphaFold got that much GPU time and devs working on it. Heck, we need to understand the human genome, of which only 1-2% is understood. Apply AI to that instead of an AI that spouts out cheap Shakespeare knockoffs and shitty code.

I completely agree, but the original question was why people hate on AIs so much, and the reason is LLMs

Removed by mod

Frfr no cap

If I just hand wave all the bad things and call them amazing, AI is everything but the bad things!

~~cryptobros~~ AI evangelists

What do you think AI has to do with crypto, other than that they are both technologies which have entered the mainstream recently and been pushed hard? Like, what do they actually have in common?

People think they’re bad /s ;)

This but unironically

I think there are some fair reasons, and some… Just ignorant parroted opinions lol

the crypto scam ended when the AI scam started. AI conveniently uses the same/similar hardware that crypto used before the bubble burst.

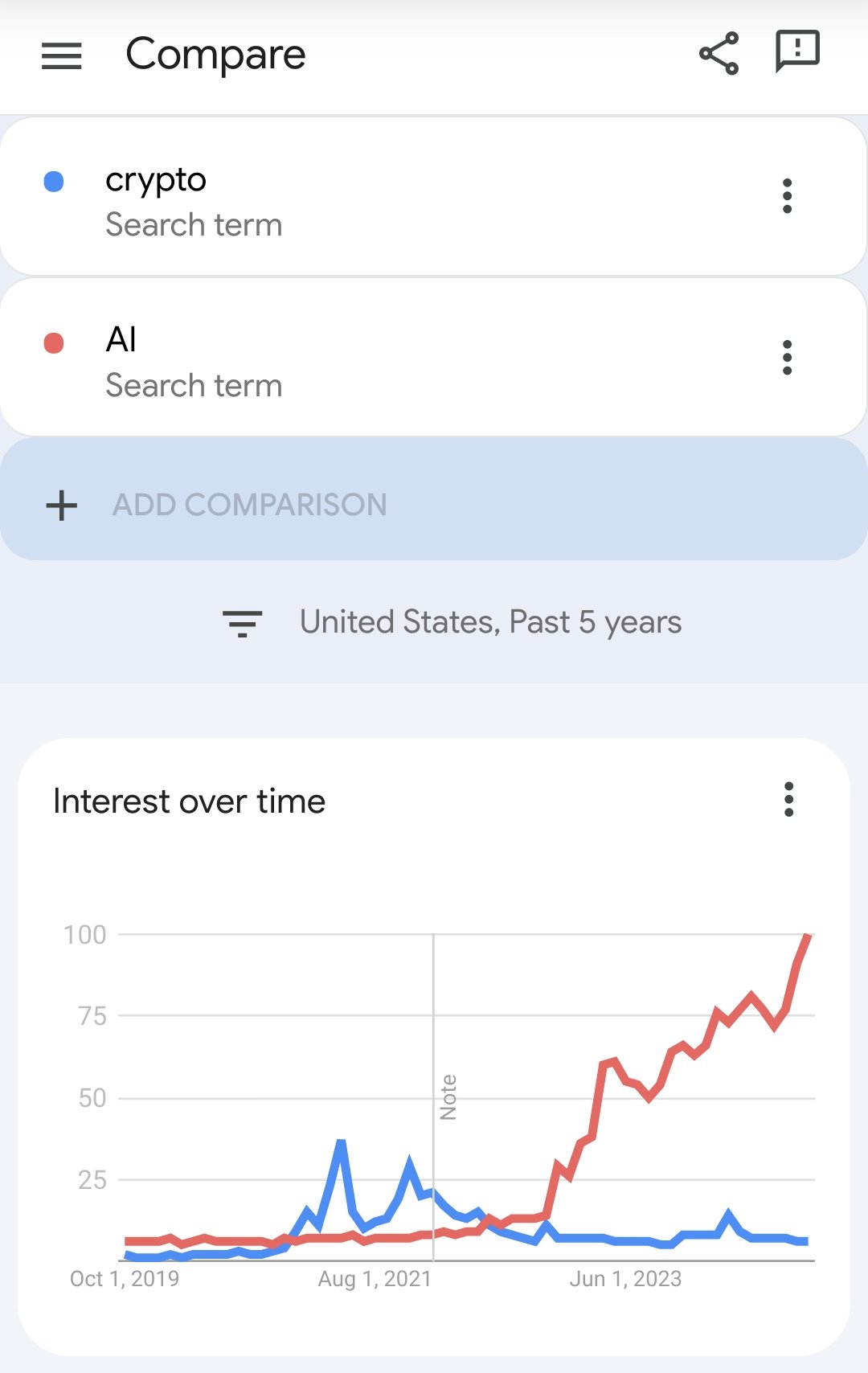

that not enough? take a look at this google trends chart that shows AI took off when interest in crypto died.

so yeah, there’s a lot more that connects the two than what you’d like people to believe.

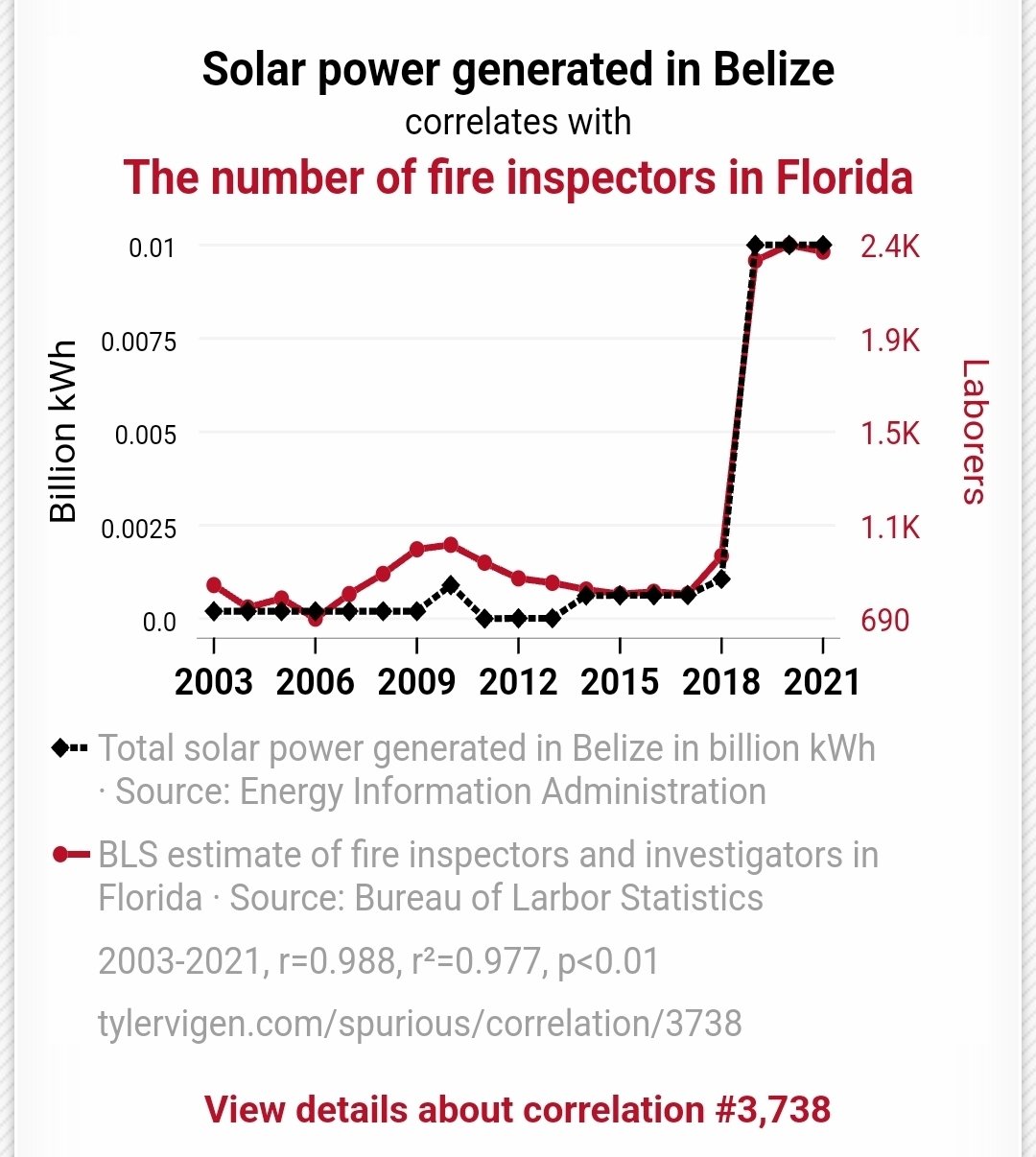

Hold the phone, I found two things that are even more closely related

The fact that they both use the GPU is mildly interesting, but means nothing beyond conspiracy theories. These events were set in motion decades ago, it’s not like AI was invented because Crypto died.

Yes, I am aware of the irony of the website I got that chart from now using GenAI everywhere. That doesn’t make my point less true though.

If AI image generation is so bad why do we have so many etsy and patreon artists complaining about it?

If no one would use it because it is so bad why would anyone care that it is trained on their products?

Do you know this joke about MAGA and the Schrodinger’s immigrant? They somehow believe that immigrants are both stealing people’s jobs and lazy and living on welfare.

AntiAI bros are somehow similar. AI is at the same time stealing artists’ jobs and completely useless, incapable of producing anything that people would want.

I would love AI. Still waiting for it. Probably 50 years away (if human society lasts that long).

What I hate is the term being yet another scientific term to get stolen and watered down by brainless capitalists so they can scam money out of other brainless capitalists.

What I hate is the term being yet another scientific term ~~to get stolen and watered down~~ created by ~~brainless capitalists~~ researchers and scientists so they can ~~scam money out of~~ describe ideas to other ~~brainless capitalists~~ researchers and scientists.

The only place where AI is used to mean an artificial intelligence on the same level as humans is in fucking science fiction.

Is it hard to comprehend that when people say AI on the topic of something made by computer scientists they refer to the thing computer scientists call AI?

Do you go on gaming conversations and say: “Um… Akshually… it’s not AI… it’s just a behaviour heuristics 🤓”

“Brainless capitalists” weren’t invented in 2019.

Oh yes, Alan Turing, such a famous capitalist.

I suppose you did say more than fifty years, which technically includes someone that died in 1954, but he also defined it in a way that even current models don’t meet, so here we are, back at brainless capitalists.

they will automate all menial jobs, fire 90% of the workers and ask the remaining 10% to oversee the AI automated tasks while also doing all other tasks which cannot be automated. all so that shareholders can add some more billions on top of their existing stack of billions.

Sure, Eli Whitney.

How about the machines automate the complicated jobs to make as many menial jobs for me as possible? Computers these days are all lazy. They could optimize scheduling so the neighbors and I all get time together and time apart for a hundred hours of kicking dirt down at the office each year, instead they hang around doing vapes and abstract paintings of hands.

For me, it’s because AI is referring to an LLM, which is not AI. Also, these LLMs use a crap load of energy to do things that we can currently do ourselves for much less energy.

But actual AI? Yes, please!

Today I learned about AI agents in the news and I can only think: Jesus. The example shown was of an AI agent using voice synthesis to bargain with a human agent over the fee for a night in some random hotel. In the news, the commenter talked about how people could use these agents to get rid of annoying, repetitive, unwanted phone calls. Then I remembered the night my in-laws were tricked into giving their car away to robbers because they ~~thought~~ were told my sister-in-law was kidnapped, all through a phone call.

Yeah, AI agents will free us all from invasive megacorporations. /s

Why isn’t anyone saying that AI and machine learning are (currently) the same thing? There’s no such thing as “Artificial Intelligence” (yet)

It’s more that intelligence is very poorly defined, so a less controversial statement is that Artificial General Intelligence doesn’t exist.

Also Generative AI such as LLMs are very very far from it, and machine learning in general hasn’t yielded much result in the pursuit of sophonce and sapience.

Although they technically can pass a Turing test as long as the Turing test has a very short time limit and the Turing testers are chosen at random.

I work in an ML-adjacent field (CV) and I thought I’d add that AI and ML aren’t quite the same thing. You can have non-learning based methods that fall under the field of AI - for instance, tree search methods can be pretty effective algorithms to define an agent for relatively simple games like checkers, and they don’t require any learning whatsoever.
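To make the "no learning required" point concrete, here is a minimal sketch of exhaustive game-tree search. Checkers is too large for a short example, so this assumes a much simpler stand-in game: a pile of stones, each player removes 1 to 3, and whoever takes the last stone wins. The function names `can_win` and `best_move` are hypothetical, chosen for this illustration.

```python
from functools import lru_cache

MOVES = (1, 2, 3)  # a player may remove 1, 2, or 3 stones per turn


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """True if the player to move can force a win with `stones` left.

    With 0 stones the player to move has already lost, because the
    opponent took the last stone; `any` over no moves returns False.
    """
    return any(
        not can_win(stones - take) for take in MOVES if take <= stones
    )


def best_move(stones: int):
    """Return a move that leaves the opponent in a losing position, or None."""
    for take in MOVES:
        if take <= stones and not can_win(stones - take):
            return take
    return None


print(best_move(7))  # take 3, leaving a pile of 4
print(best_move(4))  # None: every move leaves the opponent a winning pile
```

Nothing here is trained on data: the agent plays perfectly just by searching every line of play, with `lru_cache` avoiding repeated work on positions it has already evaluated.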

Normally, we say Deep Learning (the subfield of ML that relates to deep neural networks, including LLMs) is a subset of Machine Learning, which in turn is a subset of AI.

Like others have mentioned, AI is just a poorly defined term unfortunately, largely because intelligence isn’t a well defined term either. In my undergrad we defined an AI system as a programmed system that has the capacity to do tasks that are considered to require intelligence. Obviously, this definition gets flaky since not everyone agrees on what tasks would be considered to require intelligence. This also has the problem where when the field solves a problem, people (including those in the field) tend to think “well, if we could solve it, surely it couldn’t have really required intelligence” and then move the goal posts. We’ve seen that already with games like Chess and Go, as well as CV tasks like image recognition and object detection at super-human accuracy.

that heavily depends on how you define “intelligence”. if you insist on “think, reason and behave like a human”, then no, we don’t have “Artificial Intelligence” yet (although there are plenty of people that would argue that we do). on the other hand if you consider the ability to play chess or go intelligence, the answer is different.

Honestly I would consider BFS/DFS artificial intelligence (and I think most introductory AI courses agree). But yea it’s a definition game and I don’t think most people qualify intelligence as purely human-centric. Simple tasks like pattern recognition already count as a facet of intelligence.
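For anyone who hasn't met it, this is roughly what breadth-first search looks like in an introductory AI course setting: find the shortest path between two nodes in a graph. It is a minimal sketch; the example graph and the function name `bfs_path` are assumptions made up for illustration.

```python
from collections import deque


def bfs_path(graph, start, goal):
    """Return a shortest path from start to goal as a list, or None.

    `graph` maps each node to a list of its neighbours.
    """
    queue = deque([[start]])  # each queue entry is a path from `start`
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbour in graph.get(node, []):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(path + [neighbour])
    return None


# Hypothetical toy graph: edges point one way only.
graph = {"A": ["B", "C"], "B": ["D"], "C": ["D"], "D": ["E"]}
print(bfs_path(graph, "A", "E"))  # ['A', 'B', 'D', 'E']
print(bfs_path(graph, "E", "A"))  # None
```

Whether a dozen lines of queue handling counts as "intelligence" is exactly the definition game being described here.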

I forget the exact quote or who said it, but the gist is that a species cannot be considered sapient (intelligent) on an interplanetary/interstellar stage until they have discovered Calculus. I prefer to use that as my bar for the sapience of those around me as well.

Weeeell, sheeiiiiit

It very much depends on what you consider AI, or even what you consider intelligence. I personally consider LLMs AI because it’s artificial.

currently

There is also an ancient thing called an expert system

A I!